UPDATE (27/02/2018)

Some great news! It seems that that error been fixed in SQL 2014 SP2 CU3. More information here . They added following logic at the end of the replication procedures:

IF @@rowcount = 0

IF @@microsoftversion > 0x07320000

BEGIN

IF EXISTS (SELECT *

FROM sys.all_parameters

WHERE object_id = Object_id('sp_MSreplraiserror'

)

AND [name] = '@param3')

BEGIN

SET @primarykey_text =

@primarykey_text + '[TableD_ID] = '

+ CONVERT(NVARCHAR(100), @pkc1, 1)

EXEC Sp_msreplraiserror

@errorid=20598,

@param1=N'[dbo].[TableD]',

@param2=@primarykey_text,

@param3=13233

END

ELSE

EXEC Sp_msreplraiserror

@errorid=20598

END

It seems it is checking a version of SQL and if version is low it is executing proc without additional parameters.

Still if patching server is not an option for you, follow this blog post:)

END OF UPDATE

This is quite common error when you upgrade your 2014 publishers with SP2. First of all the best way of upgrading your replication infrastructure is to start with subscribers. In real world this is not always possible.

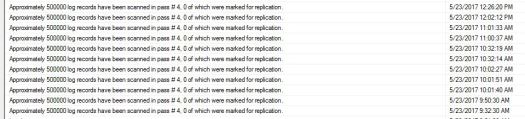

This error occurs when you apply SQL 2014 SP2 to your publishers but not to subscriber. It seems Microsoft modifying error handling (thank GOD!) for replication. Currently as you may know when u getting PK, row not found errors you only getting seqno and command id. Since 2014 SP2 the replication will give you table name and values of Primary Key (to identify the duplicate/missing row). That is great improvement as you don’t need to go to distributor to browse commands (more about browsing commands over here …. )

They added 3rd parameter to sp_MSreplraiserror procedures. Since this is systems proc you not able to update it on subscriber. The only permanent solution for this is applying SP2 to your subscriber.

However there is a workaround for this but only temporary one. First step is to script out ALL replication procs for given publication on publisher. Using following script:

sp_scriptpublicationcustomprocs @publication = ”

Once you copy output, paste it on subscriber db and replace ‘,@param3=’ with ‘- -@param3=’

As I said this is just temporary solution as these procedures may be overwritten if you make changes to some or all articles, you create new subscription or drop/recreate it etc. When you add new articles you will need to repeat process for all articles or if you like for given articles you just added.

I know it is not the best solution for this but I don’t see any other way of doing it. If you do have other ideas, let me know in comments.